How to Migrate from Vercel to GCP

Move your backend off Vercel and onto your own Google Cloud account

Vercel has grown into a full backend platform, but the infrastructure underneath is still managed in their cloud account. With AI agents lowering the barrier to getting code running, the value of a deployment platform shifts. What matters more now is what comes after: infrastructure you control, costs you can predict, and guardrails that hold up as the codebase grows.

As your backend grows on Vercel, more of your infrastructure sits in someone else's cloud account. You get their dashboard, their pricing, and their limits on what you can configure. Your compliance scope includes a third party managing infrastructure on your behalf.

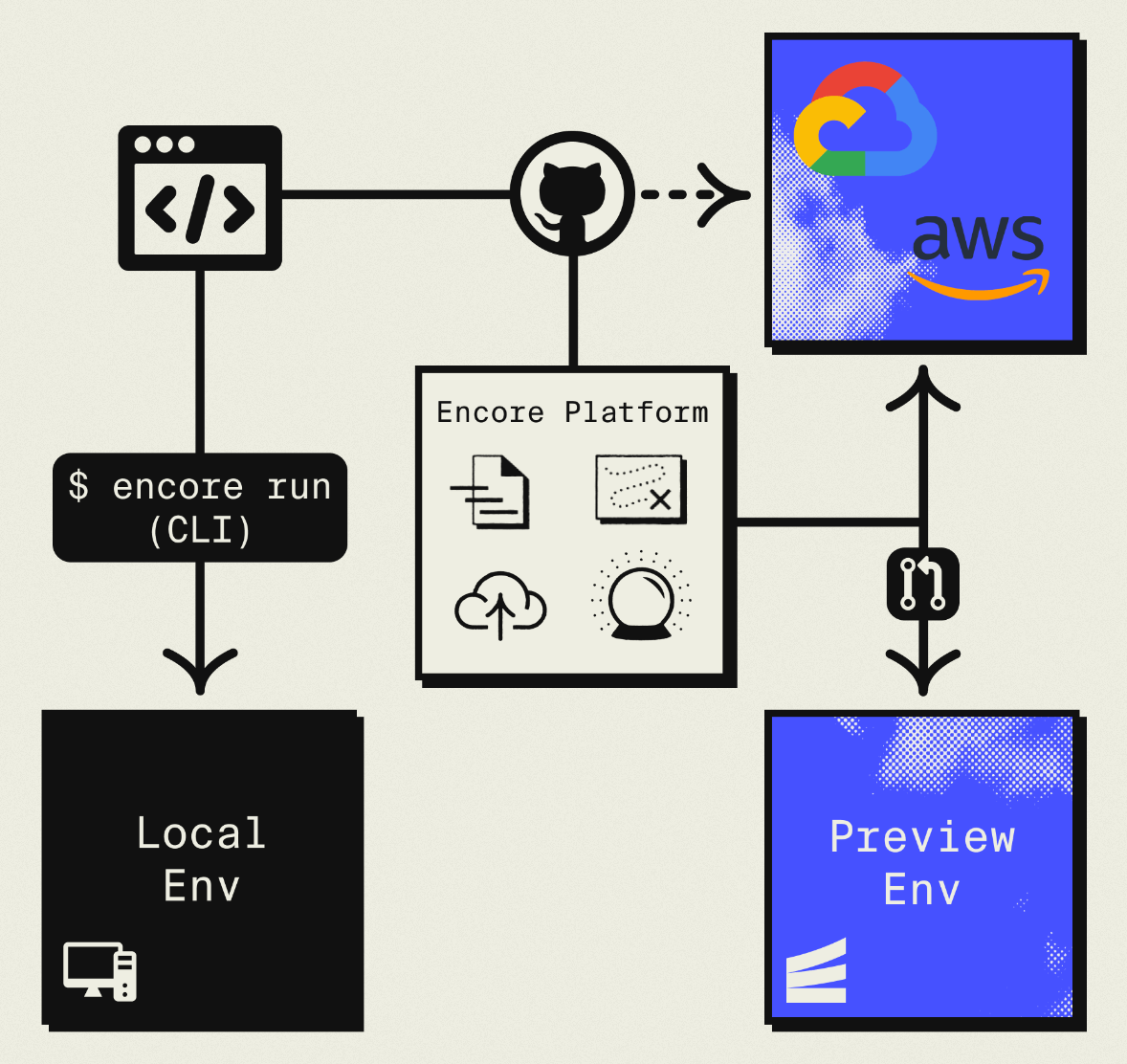

This guide walks through migrating your Vercel backend to your own GCP project using Encore and Encore Cloud. Encore is an open-source TypeScript backend framework (11k+ GitHub stars) where you define infrastructure as type-safe objects in your code: databases, Pub/Sub, cron jobs, object storage. Encore Cloud provisions these resources in your GCP project using managed services like Cloud SQL, GCP Pub/Sub, and Cloud Storage.

The result is GCP infrastructure you own and control, but with a developer experience comparable to Vercel: push code, get a deployment. You don't need to learn Terraform or maintain YAML. Companies like Groupon already use this approach to power their backends at scale.

Your frontend can stay on Vercel. This guide is about moving the backend.

What You're Migrating

| Vercel Component | GCP Equivalent (via Encore) |
|---|---|
| API Routes / Serverless Functions | Cloud Run |
| Edge Functions | Cloud Run (or keep on Vercel for edge logic) |
| Vercel Postgres (Neon) | Cloud SQL PostgreSQL |
| Vercel KV (Redis) | GCP Pub/Sub for queues, Memorystore for cache |
| Vercel Blob | Google Cloud Storage |
| Cron Jobs | Cloud Scheduler + Cloud Run |

Why GCP?

Cloud Run performance: Cloud Run has fast cold starts (often under 100ms for Node.js) and scales to zero when idle. The scaling model is familiar if you're coming from serverless.

Sustained use discounts: GCP applies sustained use discounts automatically to eligible resources, such as Compute Engine VMs, that run for a large part of the billing month. No reserved capacity purchases required.

GCP ecosystem: Direct access to BigQuery for analytics, Vertex AI for machine learning, Firestore for document storage, and other Google services without cross-cloud networking.

Existing GCP credits: Many startups have GCP credits through Google for Startups or other programs.

Data residency: GCP offers regions that Vercel may not support. Important for compliance requirements.

What Encore Handles For You

When you deploy to GCP through Encore Cloud, every resource gets production defaults: VPC placement, least-privilege IAM service accounts, encryption at rest, automated backups where applicable, and Cloud Logging. You don't configure this per resource. It's automatic.

Encore follows GCP best practices and gives you guardrails. You can review infrastructure changes before they're applied, and everything runs in your own GCP project so you maintain full control.

Here's what that looks like in practice:

```typescript
import { SQLDatabase } from "encore.dev/storage/sqldb";
import { Bucket } from "encore.dev/storage/objects";
import { Topic } from "encore.dev/pubsub";
import { CronJob } from "encore.dev/cron";

// OrderEvent (a message type) and cleanup (an API endpoint) are defined elsewhere in the app.
const db = new SQLDatabase("main", { migrations: "./migrations" });
const uploads = new Bucket("uploads", { versioned: false });
const events = new Topic<OrderEvent>("events", { deliveryGuarantee: "at-least-once" });
const _ = new CronJob("daily-cleanup", { schedule: "0 0 * * *", endpoint: cleanup });
```

This provisions Cloud SQL, GCS, Pub/Sub, and Cloud Scheduler with proper networking, IAM, and monitoring. You write TypeScript or Go, Encore handles the Terraform. The only Encore-specific parts are the import statements. Your business logic is standard TypeScript, so you're not locked in.

See the infrastructure primitives docs for the full list of supported resources.

Step 1: Migrate API Routes

Vercel API routes live in app/api/ (App Router) or pages/api/ (Pages Router). Each file exports HTTP method handlers. With Encore, each endpoint is a typed function.

Before (Next.js API Route):

```typescript
// app/api/products/route.ts
import { NextResponse } from "next/server";
import { db } from "@/lib/db";

export async function GET() {
  const products = await db.query("SELECT * FROM products ORDER BY created_at DESC LIMIT 20");
  return NextResponse.json(products.rows);
}

export async function POST(request: Request) {
  const { name, price } = await request.json();
  const result = await db.query(
    "INSERT INTO products (name, price) VALUES ($1, $2) RETURNING *",
    [name, price]
  );
  return NextResponse.json(result.rows[0], { status: 201 });
}
```

After (Encore):

```typescript
import { api } from "encore.dev/api";
import { SQLDatabase } from "encore.dev/storage/sqldb";

const db = new SQLDatabase("main", { migrations: "./migrations" });

interface Product {
  id: string;
  name: string;
  price: number;
  createdAt: Date;
}

export const listProducts = api(
  { method: "GET", path: "/products", expose: true },
  async (): Promise<{ products: Product[] }> => {
    const rows = await db.query<Product>`
      SELECT id, name, price, created_at as "createdAt"
      FROM products
      ORDER BY created_at DESC
      LIMIT 20
    `;
    const products: Product[] = [];
    for await (const row of rows) {
      products.push(row);
    }
    return { products };
  }
);

export const createProduct = api(
  { method: "POST", path: "/products", expose: true, auth: true },
  async (req: { name: string; price: number }): Promise<Product> => {
    const product = await db.queryRow<Product>`
      INSERT INTO products (name, price)
      VALUES (${req.name}, ${req.price})
      RETURNING id, name, price, created_at as "createdAt"
    `;
    return product!;
  }
);
```

The main differences:

- Request/response types are declared explicitly, with no manual JSON parsing or NextResponse wrapping

- The database connection is declared in code, not pulled from an environment variable

- Path parameters and request bodies are type-safe and extracted automatically
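
For contrast, the manual checking that the Next.js POST handler would need in order to handle untrusted input safely can be sketched as a plain TypeScript type guard. The names `CreateProductBody` and `isCreateProductBody` are hypothetical and not part of Next.js or Encore; with Encore, the declared request type is meant to make this kind of hand-written check unnecessary.

```typescript
// Hypothetical request shape, mirroring the createProduct endpoint above.
interface CreateProductBody {
  name: string;
  price: number;
}

// Hand-written runtime check of an untyped JSON body.
function isCreateProductBody(value: unknown): value is CreateProductBody {
  if (typeof value !== "object" || value === null) return false;
  const body = value as Record<string, unknown>;
  return (
    typeof body.name === "string" &&
    typeof body.price === "number" &&
    Number.isFinite(body.price)
  );
}
```

In a route handler, a guard like this would run on the result of `request.json()` before the values reach the SQL query.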

Multiple Services

If you have many API routes, create separate Encore services:

```typescript
// users/encore.service.ts
import { Service } from "encore.dev/service";

export default new Service("users");

// products/encore.service.ts
import { Service } from "encore.dev/service";

export default new Service("products");
```

Services call each other with type-safe imports:

```typescript
import { users } from "~encore/clients";

const user = await users.getUser({ id: order.userId });
```

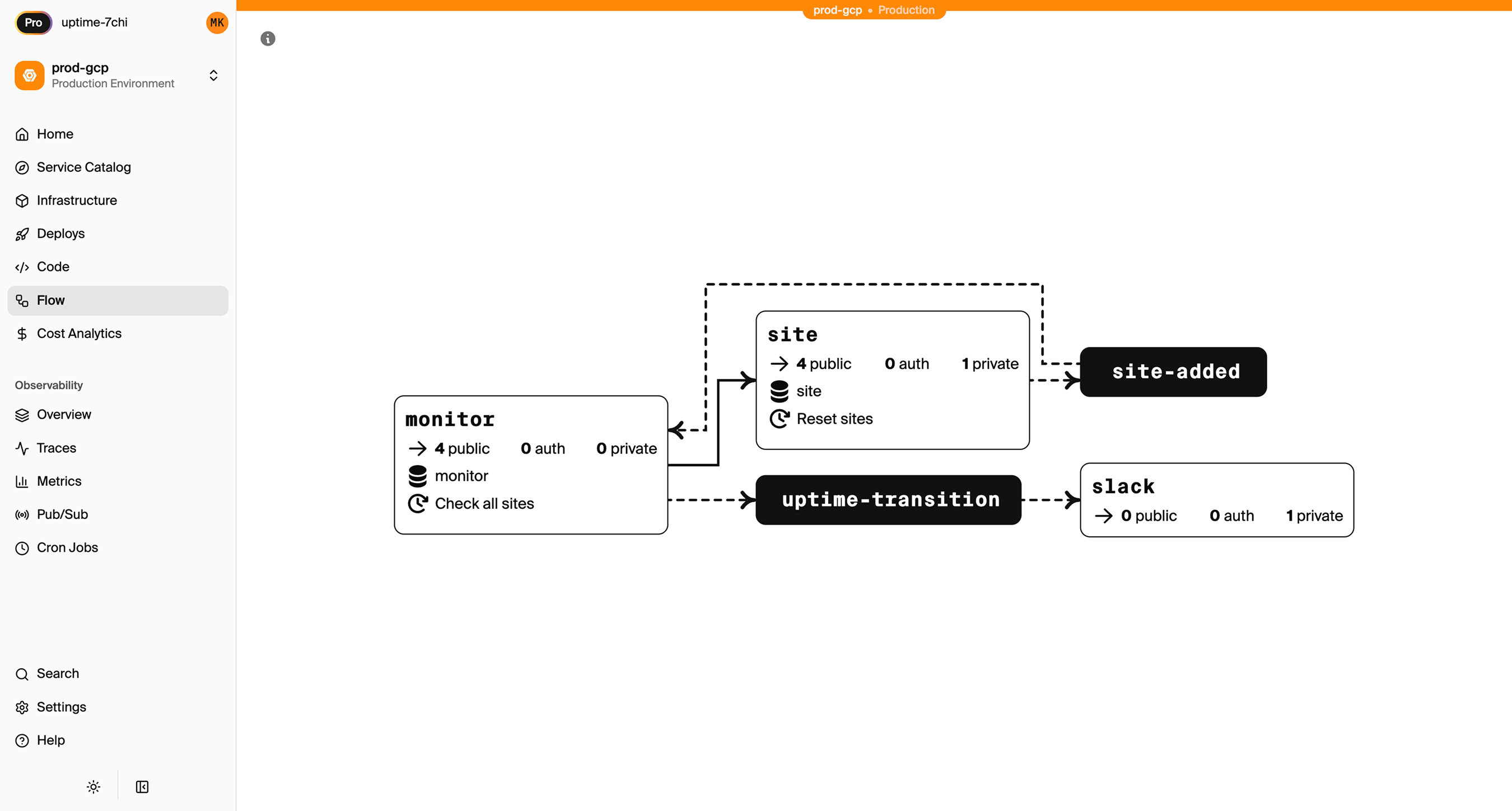

Encore Cloud visualizes how your services connect, including Pub/Sub topics, cron jobs, and database dependencies:

Step 2: Migrate the Database

Vercel Postgres is powered by Neon, which runs PostgreSQL. The migration is Postgres-to-Postgres.

Export from Vercel Postgres

Get your connection string from the Vercel dashboard (Storage > your database), then export:

```shell
pg_dump "postgresql://user:pass@ep-xxxx.us-east-2.aws.neon.tech/neondb" > backup.sql
```

Set Up the Encore Database

```typescript
import { SQLDatabase } from "encore.dev/storage/sqldb";

const db = new SQLDatabase("main", {
  migrations: "./migrations",
});
```

That's the complete database definition. Encore provisions Cloud SQL PostgreSQL when you deploy.

Put your migration files in ./migrations. If you were using Drizzle or Prisma migrations, convert them to plain SQL files named like 001_create_users.up.sql.
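
As an illustration of the naming scheme, the sketch below builds file names in the `001_create_users.up.sql` style from a sequence number and a description. The helper `migrationFileName` is hypothetical, and the three-digit zero padding is an assumption taken from the example name above.

```typescript
// Hypothetical helper: builds a migration file name such as "001_create_users.up.sql".
function migrationFileName(sequence: number, description: string): string {
  if (!Number.isInteger(sequence) || sequence < 1) {
    throw new Error("sequence must be a positive integer");
  }
  // Lowercase the description and collapse anything non-alphanumeric into "_".
  const slug = description
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "_")
    .replace(/^_+|_+$/g, "");
  if (slug === "") throw new Error("description must contain letters or digits");
  return `${String(sequence).padStart(3, "0")}_${slug}.up.sql`;
}
```

Numbering the files in the order your ORM originally applied them keeps the schema history in the same sequence.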

Import to Cloud SQL

After your first Encore deploy:

```shell
# Get the Cloud SQL connection string
encore db conn-uri main --env=production

# Import your data
psql "your-cloud-sql-connection" < backup.sql
```
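
After the import, one way to sanity-check the result is to compare per-table row counts between the Neon source and Cloud SQL. The sketch below only does the comparison; how you collect the counts (for example with `SELECT COUNT(*)` per table) is left out, and the `diffRowCounts` helper is hypothetical.

```typescript
type RowCounts = Record<string, number>;

// Hypothetical helper: lists tables whose counts differ or that exist on only one side.
function diffRowCounts(source: RowCounts, target: RowCounts): string[] {
  const tables = new Set([...Object.keys(source), ...Object.keys(target)]);
  const problems: string[] = [];
  for (const table of Array.from(tables).sort()) {
    const before = source[table];
    const after = target[table];
    if (before === undefined) problems.push(`${table}: only in target (${after})`);
    else if (after === undefined) problems.push(`${table}: missing in target`);
    else if (before !== after) problems.push(`${table}: ${before} -> ${after}`);
  }
  return problems;
}
```

An empty result means the counts match; it does not prove the row contents are identical.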

ORM Compatibility

If you were using Drizzle or Prisma with Vercel Postgres, they work with Encore too. The connection is handled automatically.

Step 3: Migrate Vercel KV

For Job Queues: Use Pub/Sub

Before (Vercel KV with BullMQ):

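```typescript
import { Queue, Worker } from "bullmq";

// kvConfig holds the Redis connection options for Vercel KV (defined elsewhere).
const emailQueue = new Queue("email", { connection: kvConfig });

await emailQueue.add("welcome", { to: "user@example.com" });
```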

After (Encore Pub/Sub):

```typescript
import { Topic, Subscription } from "encore.dev/pubsub";

interface EmailJob {
  to: string;
  subject: string;
  body: string;
}

export const emailQueue = new Topic<EmailJob>("email-queue", {
  deliveryGuarantee: "at-least-once",
});

// Publish
await emailQueue.publish({
  to: "user@example.com",
  subject: "Welcome",
  body: "Thanks for signing up!",
});

// Process (runs automatically when messages arrive)
// sendEmail is your own email-sending function, defined elsewhere.
const _ = new Subscription(emailQueue, "send-emails", {
  handler: async (job) => {
    await sendEmail(job.to, job.subject, job.body);
  },
});
```

On GCP, this uses native GCP Pub/Sub with automatic retry and dead-letter handling.
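
With at-least-once delivery, a handler can run more than once for the same message, so it helps to make processing idempotent. The sketch below is plain TypeScript rather than Encore API: the `jobId` field, the in-memory `Set`, and the `send` callback are assumptions for illustration, and a real service would keep the processed IDs in a database or cache.

```typescript
interface EmailJobWithId {
  jobId: string; // hypothetical unique ID set by the publisher
  to: string;
  subject: string;
  body: string;
}

// Wraps a send function so that repeated deliveries of the same job are skipped.
function makeIdempotentHandler(
  send: (job: EmailJobWithId) => Promise<void>
): (job: EmailJobWithId) => Promise<boolean> {
  const processed = new Set<string>(); // in-memory only; lost on restart
  return async (job) => {
    if (processed.has(job.jobId)) return false; // duplicate delivery, skip
    await send(job);
    processed.add(job.jobId); // mark done only after a successful send
    return true;
  };
}
```

The returned boolean reports whether the job was actually sent, which is convenient for logging duplicate deliveries.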

For Caching: Use Encore Cache

```typescript
import { CacheCluster, StructKeyspace, expireInHours } from "encore.dev/storage/cache";

const cluster = new CacheCluster("main", { evictionPolicy: "allkeys-lru" });

interface UserProfile {
  name: string;
  email: string;
}

const profileCache = new StructKeyspace<{ id: string }, UserProfile>(cluster, {
  keyPattern: "profile/:id",
  defaultExpiry: expireInHours(1),
});
```
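
A keyspace like this is typically used in a cache-aside pattern: read from the cache, fall back to the database on a miss, then store the result with an expiry. The sketch below shows that pattern with an in-memory `Map` instead of the Encore cache API, so `TtlCache`, `getOrLoad`, and the injected clock are all hypothetical stand-ins.

```typescript
// Minimal in-memory cache with per-entry expiry, used only to illustrate cache-aside.
class TtlCache<V> {
  private entries = new Map<string, { value: V; expiresAt: number }>();
  private ttlMs: number;
  private now: () => number;

  constructor(ttlMs: number, now: () => number = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  // Returns the cached value, or calls load() on a miss or expired entry and stores the result.
  async getOrLoad(key: string, load: () => Promise<V>): Promise<V> {
    const hit = this.entries.get(key);
    if (hit !== undefined && hit.expiresAt > this.now()) return hit.value;
    const value = await load();
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
    return value;
  }
}
```

The clock is injectable only so that expiry can be exercised in tests without waiting.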

Step 4: Migrate Vercel Blob

Vercel Blob becomes Google Cloud Storage:

Before (Vercel Blob):

```typescript
import { put, del } from "@vercel/blob";

const blob = await put("avatars/user-123.jpg", file, { access: "public" });
```

After (Encore):

```typescript
import { api } from "encore.dev/api";
import { Bucket } from "encore.dev/storage/objects";

const avatars = new Bucket("avatars", { versioned: false, public: true });

export const uploadAvatar = api(
  { method: "POST", path: "/avatars/:userId", expose: true, auth: true },
  async ({ userId, data, contentType }: {
    userId: string;
    data: Buffer;
    contentType: string;
  }): Promise<{ url: string }> => {
    const key = `${userId}.jpg`;
    await avatars.upload(key, data, { contentType });
    return { url: avatars.publicUrl(key) };
  }
);
```
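
The handler above always stores the file with a `.jpg` suffix, even when `contentType` says otherwise. One possible refinement, sketched here with a hypothetical `avatarKey` helper and an assumed list of allowed image types, derives the extension from the content type and rejects anything else.

```typescript
// Assumed allow-list of image types; adjust to what your app accepts.
const EXTENSIONS = new Map<string, string>([
  ["image/jpeg", "jpg"],
  ["image/png", "png"],
  ["image/webp", "webp"],
]);

// Hypothetical helper: builds the object key for a user's avatar.
function avatarKey(userId: string, contentType: string): string {
  const ext = EXTENSIONS.get(contentType.toLowerCase());
  if (ext === undefined) throw new Error(`unsupported content type: ${contentType}`);
  return `${userId}.${ext}`;
}
```

Inside the endpoint, `avatarKey(userId, contentType)` would replace the hard-coded template string.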

Step 5: Migrate Cron Jobs

Before (vercel.json):

```json
{
  "crons": [
    {
      "path": "/api/cleanup",
      "schedule": "0 2 * * *"
    }
  ]
}
```

After (Encore):

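```typescript
import { CronJob } from "encore.dev/cron";
import { api } from "encore.dev/api";

// db is the SQLDatabase instance declared in Step 2.
export const cleanup = api(
  { method: "POST", path: "/internal/cleanup" },
  async (): Promise<{ deleted: number }> => {
    // exec does not return affected-row info, so count the deleted rows in SQL instead.
    const row = await db.queryRow<{ deleted: number }>`
      WITH removed AS (
        DELETE FROM sessions WHERE expires_at < NOW() RETURNING 1
      )
      SELECT COUNT(*)::int AS deleted FROM removed
    `;
    return { deleted: row?.deleted ?? 0 };
  }
);

const _ = new CronJob("daily-cleanup", {
  title: "Clean up expired sessions",
  schedule: "0 2 * * *",
  endpoint: cleanup,
});
```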

On GCP, this provisions Cloud Scheduler to trigger your Cloud Run service.
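
If you have several entries in vercel.json, a small script can list what each one becomes. The sketch below maps each cron entry to the name and schedule you would pass to `CronJob`; the `toCronJobSpecs` helper and the rule for deriving a name from the path are assumptions, and the schedule strings are carried over unchanged.

```typescript
interface VercelCron {
  path: string;
  schedule: string;
}

interface CronJobSpec {
  name: string; // first argument to new CronJob(...)
  schedule: string; // cron expression, copied as-is
}

// Hypothetical helper: derives a CronJob name from each vercel.json cron path.
function toCronJobSpecs(crons: VercelCron[]): CronJobSpec[] {
  return crons.map(({ path, schedule }) => {
    if (schedule.trim().split(/\s+/).length !== 5) {
      throw new Error(`expected a five-field cron expression: ${schedule}`);
    }
    // "/api/cleanup" -> "cleanup", "/api/reports/daily" -> "reports-daily"
    const name = path
      .replace(/^\/api\//, "")
      .replace(/\/+/g, "-")
      .replace(/^-+|-+$/g, "");
    return { name, schedule };
  });
}
```

Each resulting spec still needs an Encore endpoint to point at, as in the `cleanup` example above.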

Step 6: Update Your Frontend

Your Next.js frontend stays on Vercel. Update API calls to point to your Encore backend:

```typescript
// Before: relative API route
const res = await fetch("/api/users");

// After: Encore backend URL
const res = await fetch("https://api.yourapp.com/users");
```
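
Rather than hard-coding the backend URL in every call, you might centralize it. A minimal sketch, assuming the base URL comes from configuration (for example a `NEXT_PUBLIC_API_URL` environment variable, which is a hypothetical name) and using a hypothetical `apiUrl` helper:

```typescript
// Hypothetical helper: joins the backend base URL with an API path.
function apiUrl(baseUrl: string, path: string): string {
  const base = baseUrl.endsWith("/") ? baseUrl : `${baseUrl}/`;
  // Strip leading slashes so the path resolves relative to the base.
  return new URL(path.replace(/^\/+/, ""), base).toString();
}
```

A call site then becomes `fetch(apiUrl(baseUrl, "/users"))`, and switching between preview and production backends only changes the configured base URL.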

CORS Configuration

Since your frontend and backend are now on different domains, configure CORS in your encore.app file:

```json
{
  "global_cors": {
    "allow_origins_with_credentials": [
      "https://yourapp.vercel.app",
      "https://yourapp.com"
    ]
  }
}
```
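
Before deploying, it can help to confirm that every URL your frontend is served from is covered by the list. The sketch below assumes plain exact-match comparison of origins and a hypothetical `uncoveredOrigins` helper; it does not model any other matching rules Encore may apply.

```typescript
interface GlobalCors {
  allow_origins_with_credentials: string[];
}

// Hypothetical helper: returns the frontend origins that are not in the allow list.
function uncoveredOrigins(cors: GlobalCors, frontendUrls: string[]): string[] {
  const allowed = new Set(cors.allow_origins_with_credentials);
  return frontendUrls
    .map((url) => new URL(url).origin) // drops path, query, and trailing slash
    .filter((origin) => !allowed.has(origin));
}
```

Preview deployments on generated subdomains are a common source of uncovered origins, so include a sample preview URL when you run the check.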

Step 7: Deploy to GCP

- Connect your GCP project in the Encore Cloud dashboard. See the GCP setup guide for details.

- Push your code: git push encore main

- Run data migrations (database import, file sync)

- Test in preview environment

- Update your frontend to use the new API URL

- Update DNS if using a custom domain

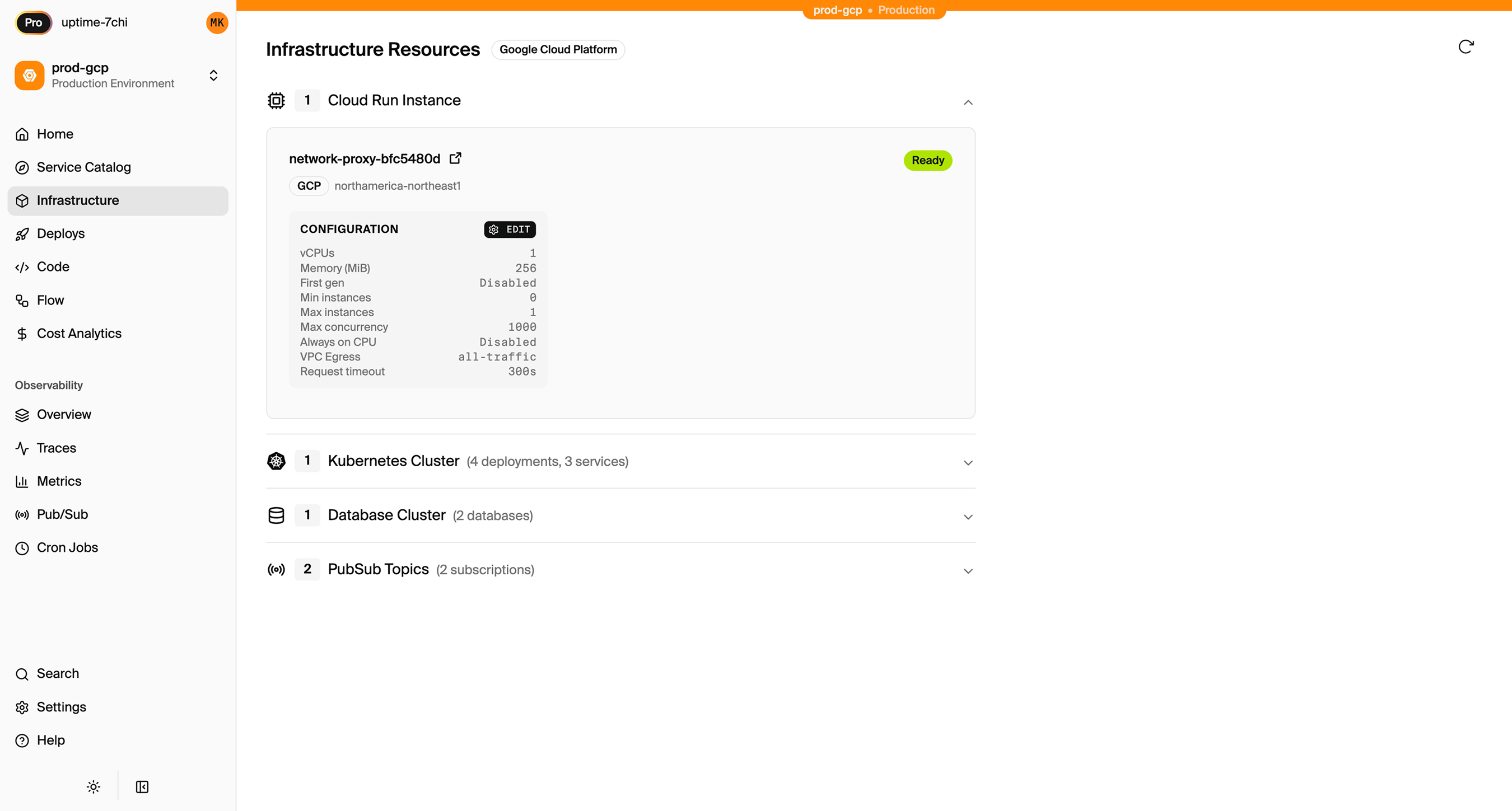

What Gets Provisioned

Encore creates in your GCP project:

- Cloud Run for your APIs (fast cold starts, scales to zero)

- Cloud SQL PostgreSQL for databases

- Google Cloud Storage for file storage

- GCP Pub/Sub for messaging

- Cloud Scheduler for cron jobs

- Cloud Logging for application logs

- IAM service accounts with least-privilege access

You can view and manage these in the GCP Console. Encore Cloud also gives you a dashboard showing all provisioned infrastructure across environments.

Migration Checklist

- Identify all API routes and serverless functions

- Create Encore app with service structure

- Migrate API routes to Encore endpoints

- Export Vercel Postgres database

- Set up Encore database with migrations

- Import data to Cloud SQL

- Migrate Vercel KV usage (cache, queues, sessions)

- Migrate Vercel Blob files to GCS

- Convert vercel.json cron jobs to CronJob

- Update frontend API calls to new backend URL

- Configure CORS for frontend domain

- Test in preview environment

- Update DNS

- Monitor for issues

Wrapping Up

Vercel's backend capabilities are real and improving. For many teams the convenience is worth the trade-off. But if you need infrastructure you own, pricing you control, and a compliance scope that doesn't include a third party managing your cloud resources, running on your own GCP project is the straightforward answer.

Encore handles the GCP provisioning so you're not trading Vercel's abstraction for Terraform's complexity. You get infrastructure in your account, managed through your code, with a developer experience that keeps you moving fast.
