How AI Agents Deploy Encore Apps to Your AWS Account

What happens between git push and running on Fargate

If you've used an AI coding agent to build a backend, you've probably noticed a gap between what the agent generates and what actually runs in production. The agent writes an Express app with endpoints and database queries, and then someone has to figure out the Dockerfiles, Terraform, IAM policies, and CI/CD pipeline separately. The application code takes minutes. The infrastructure work to deploy it can take the rest of the day.

Part of this is a training data problem, since agents reproduce the patterns they've seen most. Part of it is a tooling problem, since deploying to AWS has always required a different set of skills from writing application code.

Here's what it looks like when an AI agent builds and deploys a backend using Encore.ts, step by step.

The Prompt

You're in Cursor, Claude Code, or any agent-capable editor. You write:

Build me an order management backend with an API for creating and retrieving orders, a PostgreSQL database, and a notification system that sends events when orders are created.

What the Agent Generates

With Encore's AI integration set up (or the MCP server connected), the agent knows the framework conventions. It generates:

```typescript
// orders/orders.ts
import { api, APIError } from "encore.dev/api";
import { SQLDatabase } from "encore.dev/storage/sqldb";
import { Topic } from "encore.dev/pubsub";

// Request, response, and event shapes (inferred from how the handlers use them).
interface CreateOrderRequest {
  customerId: string;
  total: number;
}

interface Order {
  id: string;
  customerId: string;
  total: number;
}

interface OrderEvent {
  orderId: string;
  customerId: string;
  total: number;
}

// Declares a PostgreSQL database. Encore provisions it per environment.
const db = new SQLDatabase("orders", { migrations: "./migrations" });

// Declares a Pub/Sub topic. Maps to SNS+SQS on AWS, GCP Pub/Sub on GCP.
export const orderCreated = new Topic<OrderEvent>("order-created", {
  deliveryGuarantee: "at-least-once",
});

// Defines a type-safe API endpoint. Encore handles routing and validation.
export const create = api(
  { expose: true, method: "POST", path: "/orders" },
  async (req: CreateOrderRequest): Promise<Order> => {
    const order = await db.queryRow<Order>`
      INSERT INTO orders (customer_id, total)
      VALUES (${req.customerId}, ${req.total})
      RETURNING id, customer_id as "customerId", total`;

    await orderCreated.publish({
      orderId: order!.id,
      customerId: req.customerId,
      total: req.total,
    });

    return order!;
  }
);

export const get = api(
  { expose: true, method: "GET", path: "/orders/:id" },
  async ({ id }: { id: string }): Promise<Order> => {
    const order = await db.queryRow<Order>`
      SELECT id, customer_id as "customerId", total
      FROM orders WHERE id = ${id}`;
    // queryRow resolves to null when no row matches, so report a 404 instead of returning null.
    if (!order) throw APIError.notFound("order not found");
    return order;
  }
);
```

```typescript
// notifications/notifications.ts
import { Subscription } from "encore.dev/pubsub";
import { orderCreated } from "../orders/orders";

// Subscribes to the order-created topic. Encore handles message delivery and retries.
const _ = new Subscription(orderCreated, "send-notification", {
  handler: async (event) => {
    console.log(`Order ${event.orderId} created, sending notification`);
  },
});
```
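Because the topic is declared with an at-least-once delivery guarantee, the subscription handler can receive the same event more than once. The following is a minimal sketch of one way to tolerate that, not something the agent generated and not an Encore API: the `dedupeByOrderId` helper and the `OrderEvent` shape are assumptions made for illustration, and the in-memory set stands in for a persistent store.

```typescript
// Hypothetical event shape, inferred from the publish call in orders.ts.
interface OrderEvent {
  orderId: string;
  customerId: string;
  total: number;
}

type Handler = (event: OrderEvent) => Promise<void>;

// Wraps a handler so that a redelivery of an already-handled order is skipped.
// The in-memory Set is for illustration only: it is lost on restart and is not
// shared between instances, so a real service would persist the handled keys.
function dedupeByOrderId(handler: Handler): Handler {
  const seen = new Set<string>();
  return async (event) => {
    if (seen.has(event.orderId)) return; // duplicate delivery, nothing to do
    await handler(event);
    seen.add(event.orderId); // record the order only after the handler succeeds
  };
}

// Example: the wrapped handler records each order once, even if it is delivered twice.
const sent: string[] = [];
const notify = dedupeByOrderId(async (event) => {
  sent.push(event.orderId);
});
```

A wrapped handler like `notify` could be passed as the `handler` of the subscription. Recording the order only after the inner handler succeeds means a failed attempt is still retried.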

```sql
-- orders/migrations/001_create_orders.up.sql
CREATE TABLE orders (
  id UUID PRIMARY KEY DEFAULT gen_random_uuid(),
  customer_id TEXT NOT NULL,
  total NUMERIC NOT NULL,
  created_at TIMESTAMPTZ DEFAULT NOW()
);
```

The output is entirely application code. There are no Dockerfiles, Terraform modules, CI/CD configs, IAM policies, or environment variable files anywhere in the project.

What Happens on encore run

The agent (or you) runs `encore run` to test locally:

- Encore's Rust-based compiler parses all TypeScript files and builds an Application Model: a graph of every service, API endpoint, database, pub/sub topic, and their dependencies

- A local PostgreSQL instance is provisioned automatically via Docker

- The database migration runs

- Both services start with hot reload

- Pub/sub runs with production-equivalent delivery semantics

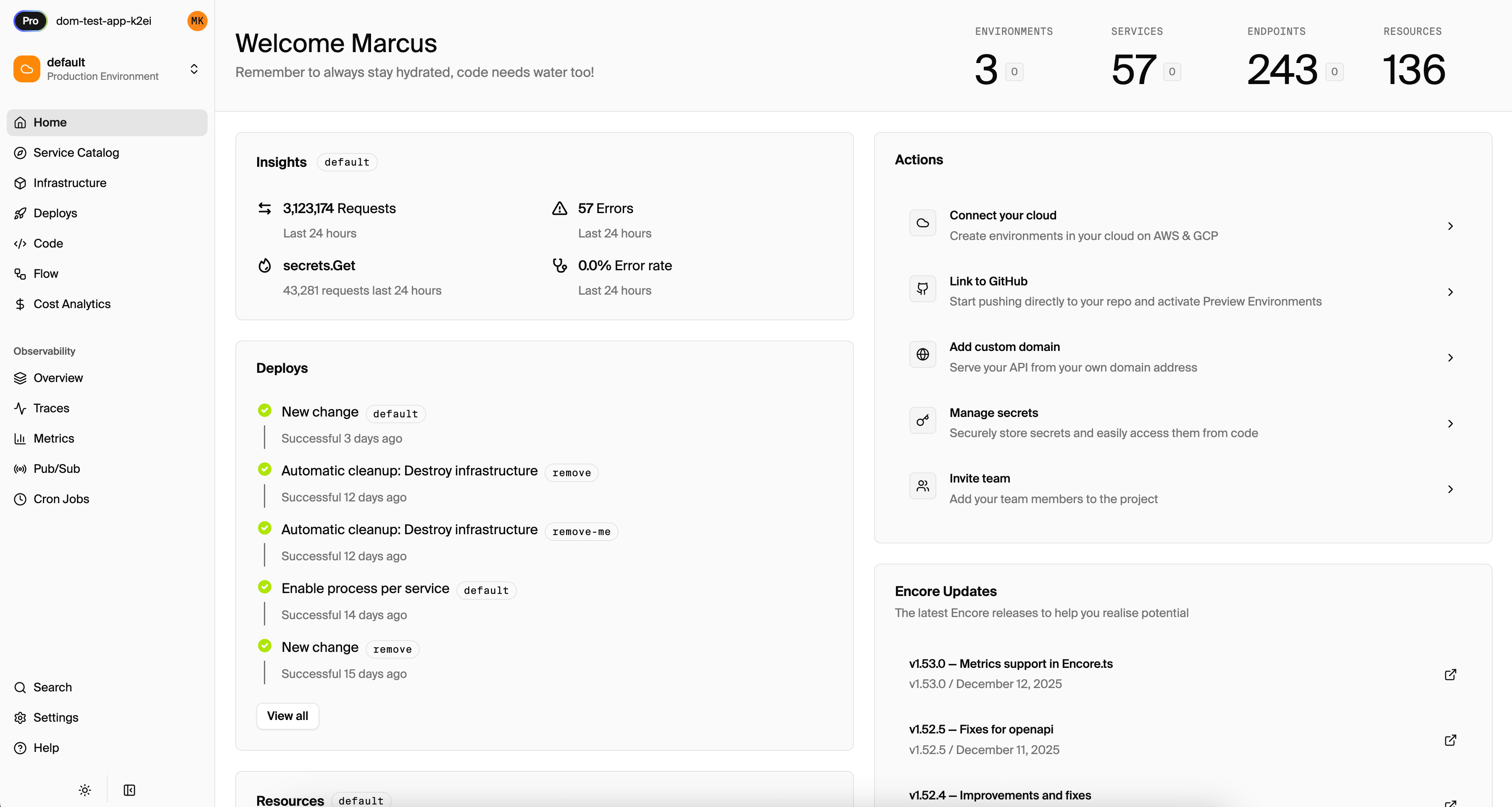

- A local dashboard opens at

localhost:9400

The dashboard gives the agent (or you) an API explorer to test endpoints, distributed traces spanning both services showing the HTTP request through to the subscription handler, a service graph, and a database browser. All of that comes from the framework with zero configuration.

What Happens on git push

When the code is pushed to the Encore Cloud remote:

Step 1: Compile-time analysis. The Rust compiler reads the code and builds the infrastructure graph. From this example, it identifies 2 services, 1 PostgreSQL database, 1 pub/sub topic, 1 subscription, 2 API endpoints, and the dependency of the notifications service on the order-created topic published by the orders service.
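To make the idea of an infrastructure graph concrete, here is a small sketch of what such a model could look like for this example. The `AppModel` type and the `serviceDependencies` function are hypothetical and greatly simplified. Encore's real internal representation is not shown in this article and will differ.

```typescript
// Hypothetical, simplified application model for the example app.
interface AppModel {
  services: string[];
  databases: { name: string; owner: string }[];
  topics: { name: string; publisher: string }[];
  subscriptions: { name: string; topic: string; subscriber: string }[];
  endpoints: { service: string; method: string; path: string }[];
}

const model: AppModel = {
  services: ["orders", "notifications"],
  databases: [{ name: "orders", owner: "orders" }],
  topics: [{ name: "order-created", publisher: "orders" }],
  subscriptions: [
    { name: "send-notification", topic: "order-created", subscriber: "notifications" },
  ],
  endpoints: [
    { service: "orders", method: "POST", path: "/orders" },
    { service: "orders", method: "GET", path: "/orders/:id" },
  ],
};

// Lists cross-service dependencies: a subscriber depends on the topic it
// listens to, and through it on the service that publishes to that topic.
function serviceDependencies(m: AppModel): string[] {
  const edges: string[] = [];
  for (const sub of m.subscriptions) {
    const topic = m.topics.find((t) => t.name === sub.topic);
    if (topic && topic.publisher !== sub.subscriber) {
      edges.push(`${sub.subscriber} -> ${topic.name} (published by ${topic.publisher})`);
    }
  }
  return edges;
}
```

For the model above, `serviceDependencies(model)` yields a single edge from the notifications service to the order-created topic, which is the dependency named in Step 1.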

Step 2: Infrastructure provisioning. Based on the connected AWS account and environment configuration, Encore Cloud provisions:

| Infrastructure component | AWS resource |
|---|---|
| Compute for orders service | Fargate task on ECS |
| Compute for notifications service | Fargate task on ECS |
| Orders database | RDS PostgreSQL with automated backups |
| order-created topic | SNS topic |
| send-notification subscription | SQS queue with dead-letter queue |
| Networking | VPC, subnets, security groups, NAT gateway |
| Access control | IAM roles per service, least-privilege policies |
| Load balancing | Application Load Balancer with target groups |
| Container registry | ECR repositories |
| Logging | CloudWatch log groups |
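The table mixes two kinds of rows: resources created once per declared item (services, databases, topics, subscriptions) and resources shared by the whole environment. The sketch below encodes that distinction. It only restates the table as code and is not how Encore Cloud is implemented; `Inventory`, `PlannedResource`, and `planAwsResources` are hypothetical names.

```typescript
// Hypothetical input: the names found in the example app.
interface Inventory {
  services: string[];
  databases: string[];
  topics: string[];
  subscriptions: string[];
}

interface PlannedResource {
  component: string;
  awsResource: string;
}

// Builds one row per declared item, followed by the shared environment-level rows.
function planAwsResources(inv: Inventory): PlannedResource[] {
  const perItem: PlannedResource[] = [
    ...inv.services.map((s) => ({
      component: `Compute for ${s} service`,
      awsResource: "Fargate task on ECS",
    })),
    ...inv.databases.map((d) => ({
      component: `${d} database`,
      awsResource: "RDS PostgreSQL with automated backups",
    })),
    ...inv.topics.map((t) => ({ component: `${t} topic`, awsResource: "SNS topic" })),
    ...inv.subscriptions.map((s) => ({
      component: `${s} subscription`,
      awsResource: "SQS queue with dead-letter queue",
    })),
  ];
  const shared: PlannedResource[] = [
    { component: "Networking", awsResource: "VPC, subnets, security groups, NAT gateway" },
    { component: "Access control", awsResource: "IAM roles per service, least-privilege policies" },
    { component: "Load balancing", awsResource: "Application Load Balancer with target groups" },
    { component: "Container registry", awsResource: "ECR repositories" },
    { component: "Logging", awsResource: "CloudWatch log groups" },
  ];
  return [...perItem, ...shared];
}

const plan = planAwsResources({
  services: ["orders", "notifications"],
  databases: ["orders"],
  topics: ["order-created"],
  subscriptions: ["send-notification"],
});
```

Adding a third service to the inventory would add one compute row and leave the shared rows unchanged.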

Step 3: Least-privilege IAM. Because the compiler knows which service accesses which resource, IAM policies are generated with minimal permissions. The orders service can read from and write to the database and publish to SNS. The notifications service can read from SQS. Neither service has permissions it doesn't need, and the policies are derived from code analysis rather than written by hand.
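As an illustration of deriving permissions from usage, the sketch below turns a list of "service X uses resource Y" facts into allow statements. It is an assumption-laden sketch rather than Encore's generated output: the `Usage` and `Statement` types and the `statementsFor` function are hypothetical, the resource strings are placeholders rather than real ARNs, and database access is left out because the article does not say how it is granted. The action names `sns:Publish`, `sqs:ReceiveMessage`, and `sqs:DeleteMessage` are standard AWS IAM actions.

```typescript
// Hypothetical facts a compiler could derive from the declarations in the code.
type Usage =
  | { service: string; kind: "publish"; topic: string }
  | { service: string; kind: "subscribe"; queue: string };

interface Statement {
  Effect: "Allow";
  Action: string[];
  Resource: string; // placeholder name, not a real ARN
}

// Returns only the statements that the given service's own usages require.
function statementsFor(service: string, usages: Usage[]): Statement[] {
  return usages
    .filter((u) => u.service === service)
    .map((u): Statement =>
      u.kind === "publish"
        ? { Effect: "Allow", Action: ["sns:Publish"], Resource: `topic/${u.topic}` }
        : {
            Effect: "Allow",
            Action: ["sqs:ReceiveMessage", "sqs:DeleteMessage"],
            Resource: `queue/${u.queue}`,
          }
    );
}

const usages: Usage[] = [
  { service: "orders", kind: "publish", topic: "order-created" },
  { service: "notifications", kind: "subscribe", queue: "send-notification" },
];

const ordersPolicy = statementsFor("orders", usages);
const notificationsPolicy = statementsFor("notifications", usages);
```

In this sketch the orders policy contains only the publish statement and the notifications policy contains only the queue statement, so neither service can act on the other's resource.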

Step 4: Deploy. Container images are built and pushed to ECR, the ECS services are updated, and the database migration runs. The whole process takes a few minutes.

Why Agents Produce Better Output This Way

When an agent works in a project that uses Express + Docker + Terraform, generating a new feature that needs infrastructure requires changes in three or four places: the application code, the Dockerfile, the Terraform, and the CI/CD pipeline. The configuration layer is where subtle errors compound: a wrong security group, a missing IAM permission, or a stale environment variable.

In an Encore project, a new feature that needs a database is one declaration: `new SQLDatabase(...)`. A new pub/sub topic is `new Topic(...)`. A new service is a new directory. The agent writes application logic with typed declarations, and the infrastructure follows from that.

The MCP Server Closes the Loop

Encore's MCP server (`encore mcp start`) connects directly to AI tools like Claude Code and Cursor. The agent can query your running application to see which services exist, what APIs they expose, what infrastructure they use, and what the latest traces look like. Instead of guessing the project structure, the agent asks the framework.

Combined with the AI integration tools and the skills package (`npx add-skill encoredev/skills`), the agent has the context it needs to generate correct Encore code on the first attempt.

From Code to Cloud Without the Middle Layer

The deployment path for an AI-generated Encore app is: agent writes code, `encore run` for local testing, `git push` for production. The infrastructure configuration, Dockerfile authoring, and Terraform planning steps that normally sit between working code and a running deployment are handled by the framework and platform.

The layer between "code works locally" and "code runs on AWS" was always the hard part. When infrastructure is derived from the code itself, the agent's output goes from local to production without someone manually translating application code into infrastructure configuration.
